Because Hyperconverged Infrastructure (HCI) and Software Defined Storage (SDS) have failed to live up to their promises, most IT leaders assume there is nothing they can do about the high cost of dedicated storage. A recent IDC study indicated that over 50% of IT planners ready for a storage refresh consider HCI, but they rule it out the majority of the time. As a result, three-tier architectures remain the default for most data centers.

The Problem with Dedicated Storage Architectures

Dedicated storage architectures, whether a storage area network (SAN) or a network-attached storage (NAS) system, are expensive to purchase, maintain, and refresh. The primary problem is the software that drives the storage products. It is laden with features that are inefficiently implemented. As the product matures, additional features are often “tacked on,” making it even more inefficient.

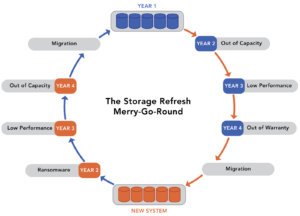

These inefficiencies mean that vendors must configure the storage hardware that ships with their storage software so that it can mask them. This compensation significantly increases the total solution’s cost because the customer must pay for the additional computing power and memory. The same inefficiencies also shorten the life span of storage infrastructure. They force customers to go through a costly refresh cycle every four to five years, and to make costly upgrades or deploy additional storage silos as new workloads come online.

Software Defined Storage is Still a Dedicated Storage Architecture

Software Defined Storage (SDS) has the same problem as dedicated storage architectures. The software is often inefficient, and you must still buy hardware and dedicate it to storage. Most SDS solutions make you buy new hardware to go with their software; they cannot take advantage of your existing hardware investment.

Dedicated Storage Shouldn’t Exist

Beyond the cost of compensating for the inefficiencies of dedicated storage hardware and SDS, there is a more basic issue: the storage software that drives dedicated arrays and NAS systems runs on the same type of server that you would use to run a hypervisor or any other application. That server has built-in networking, and in most cases, it has 24 or more bays for storage media. The same applies to most network hardware, which is also a server running a specific application.

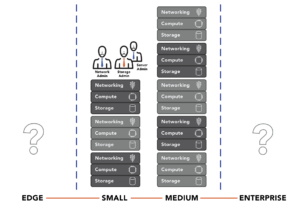

Buying multiple servers to do different things, when one server armed with efficient software could do it all, dramatically increases CAPEX and OPEX. The cost to manage and maintain this stack of at least three different servers is untenable. The result is a complex environment that requires storage, virtualization, and networking specialists instead of a single IT generalist, which further raises costs. Converging these three separate software stacks is the impetus behind Hyperconverged Infrastructure (HCI).

Why HCI Didn’t End Dedicated Storage Architectures

In 2010, when HCI first came to market, it seemed to signal the end for dedicated storage architectures, but that end never materialized. Over a decade after the first HCI solutions appeared, dedicated storage architectures are more prevalent than ever. Industry pundits like Chris Mellor label HCI a “niche market.” How could such an obvious choice for consolidating storage, virtualization, and networking, both hardware and software, not take over the market?

The first problem with HCI solutions is they didn’t converge anything. Yes, the storage and virtualization software, and in rare cases, the network software, run on the same server hardware. However, each software package has an entirely different code base and does not know that the other packages exist. There are no gains in management efficiencies as a result.

Each of the three (at least) code bases within HCI is also very inefficient because it was initially designed to run on dedicated hardware, not share hardware resources with other modules. Finally, in most cases, the storage and network software run as virtual machines within the hypervisor construct.

These realities lead to HCI being deemed a good solution for medium-sized businesses. However, as these infrastructures scale to meet the demands of larger businesses and enterprises, inefficiency is stacked on top of inefficiency, making effective utilization of the available resources a significant problem. None of the HCI vendors went to work on the core HCI challenges:

- Storage and network operations must be equal citizens with virtualization, not run as VMs.

- An HCI cluster’s east-west traffic (node-to-node communications) is significant and must be specifically optimized.

- Optimizing operational efficiency and simplifying administration requires a unified code base, not three code bases from three different vendors that are glued together by a management interface.

There is also an economic problem with HCI infrastructures. In theory, running the entire infrastructure stack on one tier instead of three should be less expensive. However, HCI is almost always the same price as, or more expensive than, the three-tier architectures it attempts to replace. These HCI designs require even more powerful servers with even more memory, and there are still three separate licenses (storage, virtualization, and networking) that must be paid for and bundled into the solution. The final straw is that most HCI solutions require you to purchase new hardware, often, conveniently, from the HCI vendor, which further increases the price.

The result is that HCI has no operational savings or economic advantages over the traditional three-tier model.

Ultraconverged Infrastructure Eliminates the High Cost of Dedicated Storage

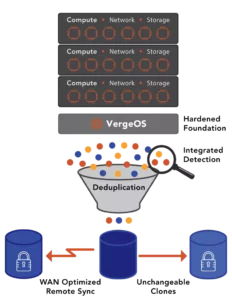

VergeIO’s Ultraconverged Infrastructure (UCI) eliminates the high cost of dedicated storage by overcoming the shortcomings of SDS and HCI. VergeOS is written from scratch to combine virtualization, storage services, and layer 2 and layer 3 network functionality into a single unified code base. With VergeOS, storage and networking are equal citizens to the hypervisor, not servants. The result is a solution that is a fraction of the code size of other solutions but provides superior features. VergeOS is typically 50% less expensive than the equivalent VMware licensing, which usually has to include add-on packages like vSAN, NSX, and vCloud Director.

VergeOS can run on just about any server hardware built within the last six years, and it will deliver better performance and longevity from that existing hardware. This hardware flexibility enables you to leverage your existing servers and enjoy additional cost savings instead of being forced into buying new servers and spending more money.

Regarding storage, VergeOS uses drives installed in the same servers that run the virtualization and networking functions. Our efficiency ensures that all three functions run at top performance and do not require additional processing power or memory. The VergeOS license is not capacity-based; it is priced per node so that IT can put as much capacity as possible in each node. Adding drives to an existing server instead of buying a dedicated storage system is an order of magnitude less expensive.
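To make the licensing point concrete, here is a rough sketch of how a per-node license behaves compared with a capacity-based one as drives are added. Every number in it (node count, price per node, price per TB) is a made-up placeholder chosen only to show the shape of the arithmetic; none of them are VergeIO or competitor prices.

```python
# Illustrative only: all prices and sizes below are hypothetical placeholders.

def per_node_license_cost(nodes: int, price_per_node: float) -> float:
    """License cost when pricing depends only on the number of nodes."""
    return nodes * price_per_node


def capacity_license_cost(capacity_tb: float, price_per_tb: float) -> float:
    """License cost when pricing depends on the total licensed capacity."""
    return capacity_tb * price_per_tb


NODES = 4                # hypothetical cluster size
PRICE_PER_NODE = 1000.0  # hypothetical per-node license price
PRICE_PER_TB = 50.0      # hypothetical capacity-based license price


def compare(tb_per_node: float) -> tuple[float, float]:
    """Return (per-node cost, capacity-based cost) for a given drive load."""
    total_tb = NODES * tb_per_node
    return (
        per_node_license_cost(NODES, PRICE_PER_NODE),
        capacity_license_cost(total_tb, PRICE_PER_TB),
    )


small = compare(tb_per_node=10)  # lightly populated drive bays
large = compare(tb_per_node=40)  # same nodes, four times the capacity
```

In this toy model the per-node figure is identical for `small` and `large`, while the capacity-based figure grows with every drive added. That is the reason a per-node license rewards filling each server's bays.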

Global Inline Deduplication is built into the very core of VergeOS and has been from day one. It was not an afterthought added years later. As a result, deduplication has no impact on performance. Because deduplication is integrated into the core of VergeOS, it drives many of our advanced features, like IOclone, our answer to snapshots; IOprotect, for disaster recovery; and IOfortify, our solution for rapid ransomware recovery. Again, all of these features are built into the core of the VergeOS software; they are not add-on modules.
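For readers unfamiliar with the general technique, the sketch below shows one common way inline deduplication can work: data is split into blocks, each block is identified by a hash of its contents, and a block is stored only the first time it is seen. It is a toy illustration of the concept, not the VergeOS implementation. The class name `DedupStore`, the 4 KiB block size, and the use of SHA-256 are all assumptions made for the example.

```python
import hashlib

BLOCK_SIZE = 4096  # hypothetical block size, chosen for illustration


class DedupStore:
    """Toy content-addressed block store with inline deduplication."""

    def __init__(self) -> None:
        self.blocks: dict[str, bytes] = {}       # digest -> block, stored once
        self.volumes: dict[str, list[str]] = {}  # volume -> ordered digests

    def write(self, name: str, data: bytes) -> None:
        """Split data into blocks and store only blocks not seen before."""
        digests = []
        for offset in range(0, len(data), BLOCK_SIZE):
            block = data[offset:offset + BLOCK_SIZE]
            digest = hashlib.sha256(block).hexdigest()
            # Inline dedup: the check happens on the write path itself.
            if digest not in self.blocks:
                self.blocks[digest] = block
            digests.append(digest)
        self.volumes[name] = digests

    def clone(self, source: str, target: str) -> None:
        """Create a copy by duplicating block references, not block data."""
        self.volumes[target] = list(self.volumes[source])

    def read(self, name: str) -> bytes:
        """Reassemble a volume from its block references."""
        return b"".join(self.blocks[d] for d in self.volumes[name])

    def physical_bytes(self) -> int:
        """Bytes actually stored after deduplication."""
        return sum(len(block) for block in self.blocks.values())


store = DedupStore()
image = b"A" * 8192 + b"B" * 4096  # three blocks, two of them identical
store.write("vm1", image)
store.write("vm2", image)          # a second, identical virtual disk
store.clone("vm1", "vm1-copy")     # a metadata-only copy
logical_bytes = sum(len(store.read(v)) for v in store.volumes)
```

Here three logical copies of a 12 KiB image consume only two unique 4 KiB blocks of physical space, and the clone costs nothing beyond a list of references. This is the general reason snapshot-like and recovery features can be built cheaply on top of a deduplicated store.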

Many potential customers reach out to us because they want a no-compromise alternative to VMware. Many others, however, reach out as part of a NAS or SAN refresh project, because the savings VergeOS can provide over the high cost of dedicated storage are even more significant than the savings it can provide versus VMware alone. Add the operational savings of a truly converged infrastructure, and you’ll see why we have incredibly high customer satisfaction.