GPU Infrastructure Without the Complexity

Your IT team already manages compute, storage, and networking in VergeOS. Now they manage GPUs the same way — from the same interface, with no specialists required.

Pass-through · vGPU · MIG

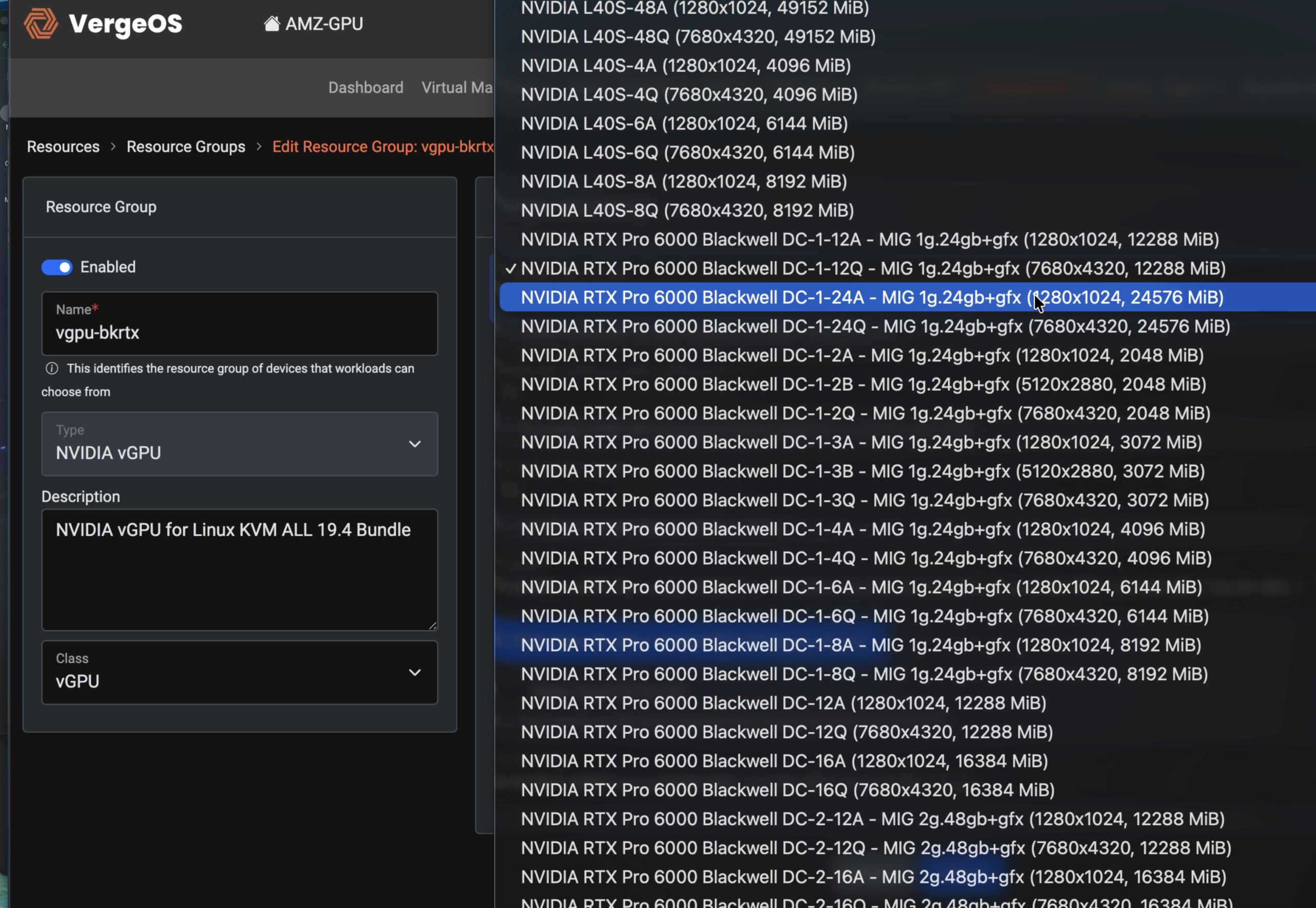

Point-and-click MIG configuration

RTX Pro 6000 with MIG + time slicing

VergeOS handles bundling and deployment

The Expertise Gap Is the Real Barrier to GPU Adoption

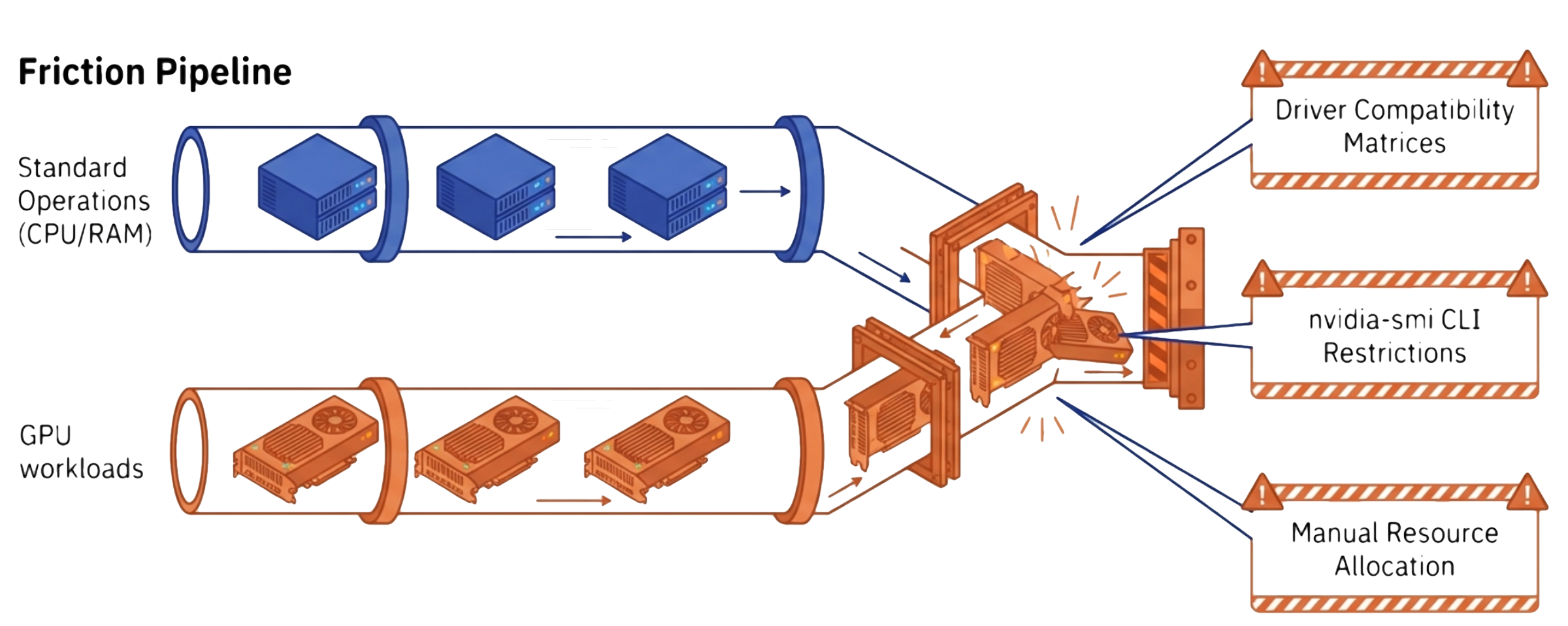

GPU hardware is accessible. Budget is there. The question every IT team faces is the same: who manages this?

Driver compatibility across hypervisor versions. MIG partitioning via command line. GPU-aware DR workflows. None of this is in the standard sysadmin skill set — so organizations hire GPU specialists, engage consultants, or defer the project entirely.

Modernizing and Simplifying GPU Resource Management

Three Pillars of the VergeOS GPU Approach

Simplicity at Scale

GPU management — pass-through, vGPU, and MIG — through a single point-and-click interface. Full REST API for programmatic control. The nvidia-smi command line is never required.

GPU as Infrastructure

GPUs are first-class VergeOS resources: scheduled, monitored, snapshotted, and replicated alongside compute and storage. Drivers are uploaded once and automatically deployed, bit-for-bit, to every guest VM.

Validated with NVIDIA

NVIDIA introduced VergeOS as a supported vGPU platform. VergeOS 26.1.3 is validated with vGPU 20. Deployed drivers are bit-for-bit identical to NVIDIA’s release. Joint support paths mean both vendors stand behind your deployment.

What Your Team Can Deploy Today

Engineering Simulation and Scientific Visualization

Engineers, designers, and scientists running CFD simulation, mesh preparation, real-time flow visualization, and scientific rendering need workstation-class GPU performance in virtual environments. VergeOS delivers those capabilities through the same point-and-click interface that manages the rest of the infrastructure — no dedicated workstation hardware and no GPU specialists required.

GPU-Accelerated VDI

Engineers, designers, and analysts get workstation-class GPU acceleration without dedicated hardware. VergeOS integrates with Inuvika OVD Enterprise for full application virtualization — provisioned from the same interface as standard desktops.

On-Premises AI Inference

Organizations with sensitive designs, regulated records, or competitive research that cannot leave the building run inference on-premises to maintain data control. VergeOS hosts these workloads in GPU-enabled VMs with MIG isolation — each service gets guaranteed compute and memory inside your security boundary.

Shared GPU Dev Environments

Split a single high-end GPU into MIG instances for multiple data science teams. Hardware-enforced isolation means no noisy neighbors and no contention. Reconfigure as project requirements shift — no command line needed.
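To make the partitioning idea concrete, here is a minimal planning sketch in Python. It is not part of VergeOS and does not call any VergeOS or NVIDIA API. It assumes the MIG profile names and slice budget of an A100 40GB (7 compute slices, 8 memory slices); other GPUs use different profiles. The helper `fits_budget` is a hypothetical name, and the check is necessary but not sufficient, because real MIG placement rules are stricter than a simple budget.

```python
# Assumed slice costs per MIG profile on an A100 40GB:
# profile name -> (compute slices, memory slices). Illustrative only.
PROFILE_COST = {
    "1g.5gb": (1, 1),
    "2g.10gb": (2, 2),
    "3g.20gb": (3, 4),
    "4g.20gb": (4, 4),
    "7g.40gb": (7, 8),
}

TOTAL_COMPUTE_SLICES = 7  # assumption: A100 40GB
TOTAL_MEMORY_SLICES = 8   # assumption: A100 40GB


def fits_budget(profiles):
    """Return True if the requested profiles stay within the slice budget.

    This only checks totals. It does not model MIG placement constraints.
    """
    compute = sum(PROFILE_COST[p][0] for p in profiles)
    memory = sum(PROFILE_COST[p][1] for p in profiles)
    return compute <= TOTAL_COMPUTE_SLICES and memory <= TOTAL_MEMORY_SLICES


# Example: two teams each get a 3g.20gb instance.
two_teams = fits_budget(["3g.20gb", "3g.20gb"])
# Example: two 4g.20gb instances exceed the compute budget.
too_large = fits_budget(["4g.20gb", "4g.20gb"])
```

A plan that passes this check is only a candidate. The layout actually applied through the VergeOS interface is what determines the result.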

Edge AI Deployment

VergeOS deploys to edge locations with the same GPU capabilities as your data center. Manufacturing QC, retail analytics, healthcare imaging — real-time inference where cloud round-trips won’t work.

Three GPU Modes. One Interface.

VergeOS supports all three NVIDIA GPU virtualization modes as native capabilities, and can combine MIG with vGPU time slicing. Select the right model for each workload; VergeOS handles configuration, driver management, and the full operational lifecycle.

| Mode | Best For | GPU Sharing | Isolation | Licensing |
|---|---|---|---|---|
| GPU Pass-Through | Full-GPU simulation, scientific compute, bare-metal workloads requiring dedicated access | None (one VM per GPU) | Complete | None |
| NVIDIA vGPU | VDI, multi-user inference, developer GPU access | Time-sliced across VMs | Software-level | vGPU License Required |
| Multi-Instance GPU (MIG) | Multi-tenant isolation, regulated workloads, guaranteed SLAs | Hardware-partitioned slices | Hardware-enforced in silicon | None beyond hardware |
| MIG + Time Slicing | Maximum VM density with per-tenant isolation | MIG isolation + time slicing within each instance | Hardware-enforced (MIG tier) | vGPU License Required |

NVIDIA vGPU software licenses are required for vGPU operation and are available through NVIDIA-authorized partners.
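The table above can be read as a simple decision rule. The Python sketch below encodes that reading for illustration. It is not a VergeOS API, and the names `GpuMode` and `pick_mode` and the three yes/no inputs are hypothetical simplifications of the "Best For" column.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuMode:
    name: str
    isolation: str
    needs_vgpu_license: bool


# Rows taken from the comparison table.
PASS_THROUGH = GpuMode("GPU Pass-Through", "Complete", False)
VGPU = GpuMode("NVIDIA vGPU", "Software-level", True)
MIG = GpuMode("Multi-Instance GPU (MIG)", "Hardware-enforced in silicon", False)
MIG_TIME_SLICING = GpuMode("MIG + Time Slicing", "Hardware-enforced (MIG tier)", True)


def pick_mode(share_gpu, hardware_isolation, max_density=False):
    """Map three simplified workload questions to a row of the table.

    share_gpu: should more than one VM use the same physical GPU?
    hardware_isolation: must tenants be isolated in hardware?
    max_density: is packing the most VMs per GPU the priority?
    """
    if not share_gpu:
        return PASS_THROUGH
    if hardware_isolation and max_density:
        return MIG_TIME_SLICING
    if hardware_isolation:
        return MIG
    return VGPU


# Example: a regulated multi-tenant inference service.
choice = pick_mode(share_gpu=True, hardware_isolation=True)
print(choice.name, "| vGPU license required:", choice.needs_vgpu_license)
```

Real selection also depends on GPU model support and workload behavior, so treat this as a starting point for the conversation rather than a sizing tool.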

Supported. Validated. Joint Support.

NVIDIA introduced VergeOS as a supported vGPU platform. Both vendors stand behind your deployment — when GPU issues arise, VergeOS and NVIDIA engineering collaborate with no finger-pointing and no coverage gaps.

A100, A30, A40, L40 series — full vGPU operation confirmed in VergeOS 26.1.3. RTX Pro 6000 Blackwell Server Edition — MIG vGPU validated.

Customers obtain NVIDIA GPU drivers (available with valid vGPU licenses) and upload once. VergeOS bundles them into an ISO and auto-attaches to guests on GPU assignment. Deployed drivers are bit-for-bit identical to NVIDIA’s release.

VergeOS 26.1.3 validated with vGPU 20 — the March 2026 major release. Includes support for the RTX 4500 Blackwell Server Edition tier and MIG + time slicing on KVM.

NVIDIA field engineers evaluating VergeOS cited automated MIG configuration as a standout differentiator. Their direct reaction to MIG configuration in the VergeOS UI: “That’s huge.”

GPU Virtualization Without the Complexity

Join Aaron Richman and NVIDIA’s Jimmy Rotella for a live walkthrough of vGPU, pass-through, and MIG in a unified private cloud environment. See MIG configuration in the UI, one-time driver upload, and GPU-aware DR — no CLI, no specialist required.

Ready to See VergeOS GPU Management in Action?

Schedule a technical deep dive to see how VergeOS handles GPU pass-through, vGPU, and MIG on your existing infrastructure — managed by the same team that runs your VMs today.

855-855-8300 · verge.io