VergeOS adds a CSI driver, a Cloud Controller Manager, a Cluster Autoscaler, and a Rancher node driver as native Helm charts. The release changes the Kubernetes VMware exit math by collapsing three licensing taxes into one platform decision.

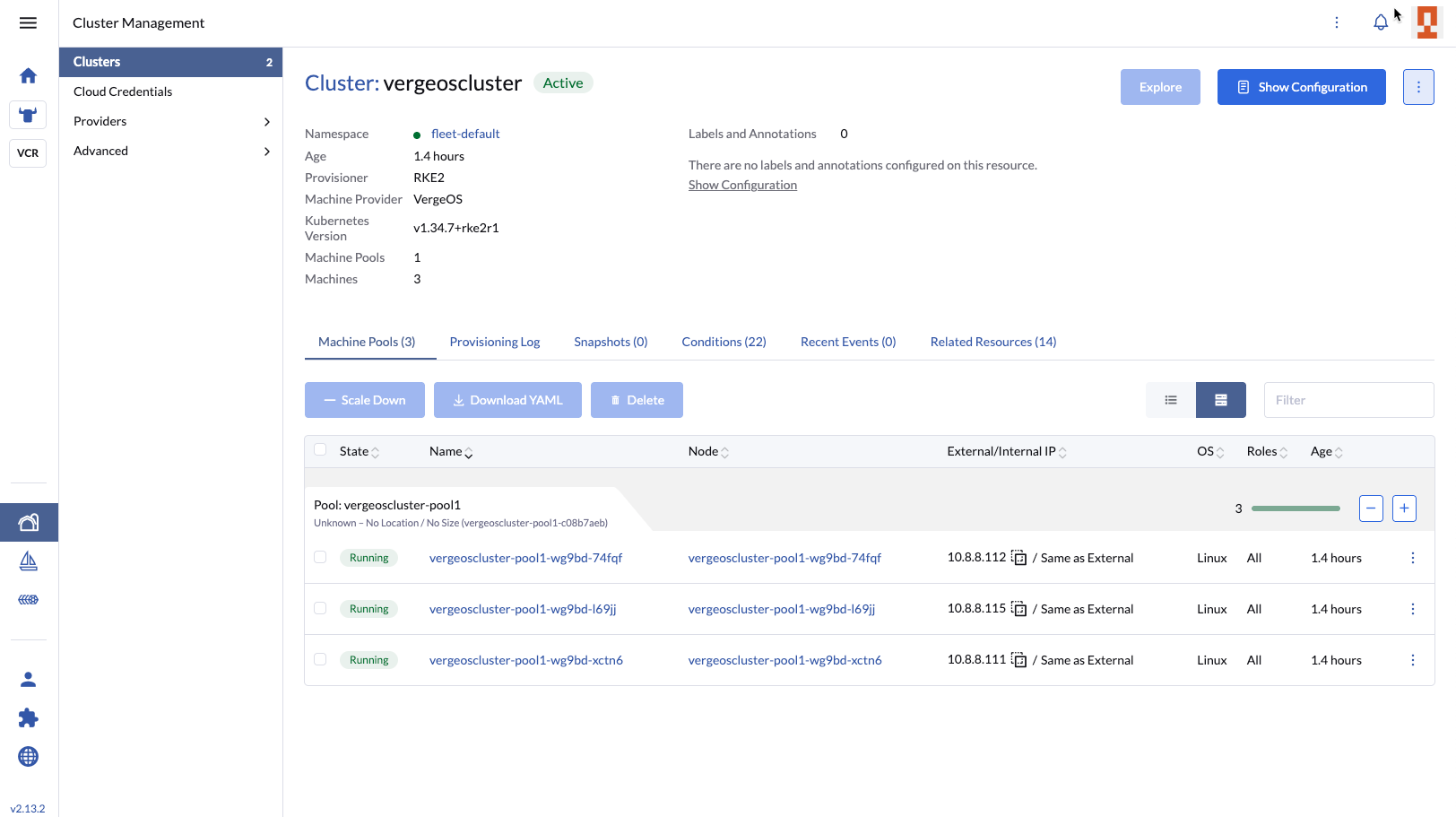

VergeIO this week announced general availability of Kubernetes support in VergeOS, and the release changes the Kubernetes VMware exit math for IT. It ships as four Helm charts on the verge-io GitHub repository, distributed as an integrated layer that delegates storage, networking, autoscaling, and node provisioning to the VergeOS API. The full announcement sits on the verge.io press release page.

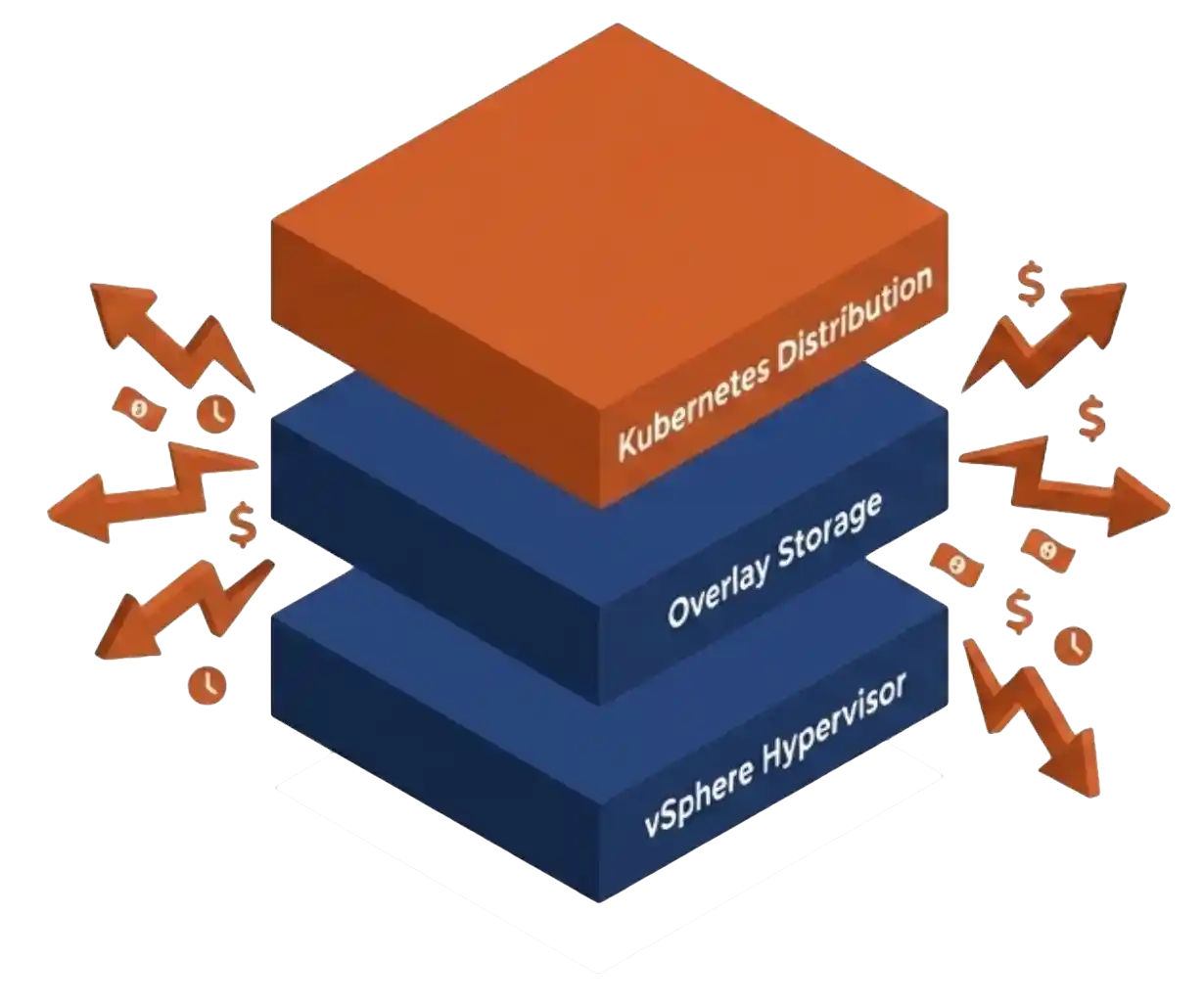

IT teams running Kubernetes on VMware pay three separate vendors to do one job. They pay Broadcom for vSphere licensing on the cluster nodes. They pay a Kubernetes distribution fee to Tanzu, OpenShift, or Rancher Prime. Many also pay a third bill for overlay storage such as Longhorn or Portworx, because vSphere storage policies do not extend cleanly into Kubernetes without commercial Tanzu add-ons.

The companion datasheet walks IT through what each tax costs in operational time, not just in licensing spend. The argument is direct. Most VMware exits in flight today swap the hypervisor and leave the rest of the orchestrated stack in place. That move trims the licensing line and preserves the coordination cost across compute, storage, and the Kubernetes management plane for another three budget cycles.

Key Takeaways

- VergeOS ships native Kubernetes support as four Helm charts on the verge-io GitHub repository. Rancher remains the management plane.

- VMware shops running Kubernetes pay three separate licensing taxes for the same workload, and the coordination cost across them runs higher than the licensing line.

- A hypervisor swap addresses one tax. The Private Cloud OS pattern collapses all three into a single platform contract.

- The integration was validated in production with NGAMING and Nesine, the design partner from Türkiye’s digital entertainment and gaming sector.

- The CNCF 2025 survey reported Kubernetes production adoption at 82%, the highest in the survey’s history. Stateful workloads are now the majority case.

The Three Taxes Behind the Kubernetes VMware Exit Math

The first tax is vSphere itself. Broadcom moved the model from perpetual licensing to subscription in late 2023. In April 2025, the minimum license purchase climbed from 16 cores to 72 cores, forcing smaller VMware customers to pay for capacity their workloads did not need. Reports of price increases ranging from 150% to more than 1,000% are well documented among customers caught by the 72-core floor.
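To see why the 72-core floor lands hardest on small shops, here is a rough sketch of the core arithmetic. It assumes billable cores are simply the larger of deployed cores and the purchase floor, at a flat per-core price. Actual Broadcom quotes apply additional rules that this sketch deliberately ignores, so treat it as an illustration of the floor effect, not a pricing model.

```python
# Illustrative arithmetic for the minimum-purchase floors described above.
# Assumption: billable cores = max(deployed cores, purchase floor), priced at
# a flat per-core rate. Other licensing rules are intentionally left out.

OLD_FLOOR_CORES = 16  # minimum purchase before April 2025 (per the article)
NEW_FLOOR_CORES = 72  # minimum purchase from April 2025 (per the article)


def billable_cores(deployed_cores: int, floor: int) -> int:
    """Cores a customer must license under a simple minimum-purchase floor."""
    if deployed_cores < 0:
        raise ValueError("deployed_cores must be non-negative")
    return max(deployed_cores, floor)


def floor_uplift(deployed_cores: int) -> float:
    """Ratio of billable cores under the 72-core floor vs. the 16-core floor."""
    new = billable_cores(deployed_cores, NEW_FLOOR_CORES)
    old = billable_cores(deployed_cores, OLD_FLOOR_CORES)
    return new / old


if __name__ == "__main__":
    for cores in (16, 32, 48, 72, 128):
        print(f"{cores:>4} deployed cores -> {floor_uplift(cores):.2f}x billable cores")
```

For a shop with 16 deployed cores, the floor alone means licensing 4.5 times as many cores (a 350% increase) before any change in per-core price. At 72 cores and above, the floor stops mattering.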

The second tax is the Kubernetes distribution. Tanzu Kubernetes Grid, OpenShift, and Rancher Prime all charge against the same nodes that already pay for vSphere. The fee scales independently of the platform underneath. It renews on its own calendar with its own support relationship and its own integration matrix.

The third tax is overlay storage. vSphere does not project storage policies cleanly into Kubernetes without commercial Tanzu add-ons. Teams adopt Longhorn, Portworx, OpenEBS, or Rook. The overlay layer runs as a duplicate of the underlying vSAN, doubling the metadata, doubling the snapshot tooling, and doubling the management overhead. The CSI calls land on the overlay, the overlay translates to vSAN, and the team owns the contract between them.

All three taxes feed into the exit math that IT operations inherits once procurement signs three contracts. The licensing line is the visible cost. The coordination across compute, storage, and the Kubernetes management plane is the cost procurement does not see.


What VergeOS Kubernetes Support Looks Like

VergeIO is not introducing a Kubernetes distribution. The support layer assumes customers already run a distribution they trust. RKE2, K3s, vanilla upstream, and vendor distributions all work. The four Helm charts deliver the contracts Kubernetes needs from a platform underneath.

The CSI driver supports two backends. The NAS backend serves EXT4 volumes over NFS for ReadWriteMany access. The block backend hotplugs VergeOS vSAN block drives directly to VergeOS VMs for ReadWriteOnce access. A persistent volume snapshot becomes a VergeOS vSAN snapshot, so stateful workloads share the same DR and replication infrastructure that protects production VMs.
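From the workload side, choosing a backend comes down to the StorageClass a PersistentVolumeClaim names. The sketch below builds standard Kubernetes PVC and VolumeSnapshot manifests as plain dictionaries. The StorageClass names `vergeos-nas` and `vergeos-block` and the VolumeSnapshotClass `vergeos-snapshots` are hypothetical placeholders, not names confirmed by the verge-io charts; check the chart values for the real ones.

```python
import json

# Hypothetical mapping from Kubernetes access mode to a VergeOS CSI backend.
# The StorageClass names are placeholders, not confirmed chart defaults.
BACKEND_FOR_ACCESS_MODE = {
    "ReadWriteMany": "vergeos-nas",    # NAS backend: EXT4 volumes over NFS
    "ReadWriteOnce": "vergeos-block",  # block backend: hotplugged block drive
}


def pvc_manifest(name: str, size: str, access_mode: str) -> dict:
    """Build a PersistentVolumeClaim whose StorageClass matches the access mode."""
    if access_mode not in BACKEND_FOR_ACCESS_MODE:
        raise ValueError(f"unsupported access mode: {access_mode}")
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": [access_mode],
            "storageClassName": BACKEND_FOR_ACCESS_MODE[access_mode],
            "resources": {"requests": {"storage": size}},
        },
    }


def snapshot_manifest(pvc_name: str, snapshot_name: str) -> dict:
    """Build a CSI VolumeSnapshot of an existing PVC."""
    return {
        "apiVersion": "snapshot.storage.k8s.io/v1",
        "kind": "VolumeSnapshot",
        "metadata": {"name": snapshot_name},
        "spec": {
            "volumeSnapshotClassName": "vergeos-snapshots",  # hypothetical name
            "source": {"persistentVolumeClaimName": pvc_name},
        },
    }


if __name__ == "__main__":
    db = pvc_manifest("postgres-data", "50Gi", "ReadWriteOnce")
    shared = pvc_manifest("shared-assets", "200Gi", "ReadWriteMany")
    snap = snapshot_manifest("postgres-data", "postgres-data-nightly")
    print(json.dumps([db, shared, snap], indent=2))
```

In Kubernetes, the claim's StorageClass selects the backend, and the CSI driver does not infer it from the access mode. The helper just picks the class whose backend supports the requested mode, which keeps an RWX request from landing on block storage.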

The Cloud Controller Manager populates node metadata and handles LoadBalancer service provisioning through VergeOS VNet NAT rules. Most clusters do not need MetalLB or an external load balancer. The Rancher node driver and UI extension finish the picture. An operator who provisions Rancher clusters on vSphere provisions Rancher clusters on VergeOS with the same flow. Rancher stays as the management plane the team already uses, which shifts the Kubernetes VMware exit math in the operator’s favor before the migration starts.
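A Service of type LoadBalancer is the request the Cloud Controller Manager fulfils. The sketch below shows a standard LoadBalancer Service manifest, followed by a conceptual model of the NAT forwarding that could satisfy it. The `NatRule` shape, the addresses, and the assumption that each rule forwards an external port to a node's NodePort are all illustrative. They are not the VergeOS VNet API or the CCM's actual logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NatRule:
    """Hypothetical stand-in for one VergeOS VNet NAT rule (not the real API)."""
    external_ip: str
    external_port: int
    target_ip: str
    target_port: int
    protocol: str


def service_manifest(name: str, port: int, target_port: int, app: str) -> dict:
    """Standard Kubernetes Service of type LoadBalancer."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "LoadBalancer",
            "selector": {"app": app},
            "ports": [{"port": port, "targetPort": target_port, "protocol": "TCP"}],
        },
    }


def nat_rules(external_ip: str, service_port: int, node_port: int,
              node_ips: list[str], protocol: str = "TCP") -> list[NatRule]:
    """Assumed model: one rule per node, external_ip:service_port -> node:NodePort."""
    return [
        NatRule(external_ip, service_port, ip, node_port, protocol)
        for ip in node_ips
    ]


if __name__ == "__main__":
    svc = service_manifest("web", 443, 8443, "web")
    print(svc["spec"]["type"])
    # 31443 stands in for whatever NodePort Kubernetes allocates (hypothetical).
    for rule in nat_rules("203.0.113.10", 443, 31443, ["10.0.0.11", "10.0.0.12"]):
        print(rule)
```

The point of the sketch is the division of labor. The Service stays a portable Kubernetes object, and the platform underneath supplies the external address, so no MetalLB speaker or external appliance has to run inside the cluster.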

Comparison: Conventional Stack vs. VergeOS

| Layer | Conventional Stack | VergeOS |
|---|---|---|
| Hypervisor | VMware vSphere ESXi | VergeHV |
| Distributed storage | vSAN, dedicated SAN/NAS | VergeFS |
| Kubernetes distribution | TKG, OpenShift, Rancher Prime | Customer’s existing choice |
| Management plane | Tanzu Mission Control, Rancher | Rancher continues |
| Kubernetes storage | vSphere CSI plus overlay | Native VergeOS CSI |
| LoadBalancer | NSX Advanced Load Balancer, MetalLB, F5 | Cloud Controller Manager |
| Snapshot scope | Per VM, per overlay, fragmented | Per VDC or per PV, single platform |

Why the Kubernetes VMware Exit Math Matters to IT

The real VMware exit math is not in the licensing line. It is in the coordination time IT teams pay across three vendors. Procurement signs three contracts on three renewal calendars. Operations runs three upgrade matrices. Support escalations route to three vendors, and the integrations between them sit in nobody’s contract.

CloudBolt’s January 2026 survey of 302 enterprise IT decision-makers found 86% actively reducing their VMware footprint. Only 4% reported a full migration. The mass exodus is not happening. The slow unwind is, and the projects in flight are the ones that matter for the next three budget cycles.

The CNCF 2025 survey put Kubernetes production adoption at 82%, the highest in the survey’s history. The 2024 report found 74% of organizations use containers for stateful applications in production. Stateful workloads are now the majority case, and the upstream Kubernetes specification does not provide the data protection semantics those workloads need. They get those semantics from the storage substrate underneath.

The argument from VergeIO is direct. The right exit from VMware-plus-Kubernetes is a Private Cloud OS, not another hypervisor. Collapsing the three taxes into one platform changes the Kubernetes VMware exit math for IT. The migration runs through Rancher, with workloads moving on the customer’s timeline.

Frequently Asked Questions

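Does VergeOS replace Rancher?

No. Rancher remains the management plane. The Rancher node driver and UI extension let operators provision clusters on VergeOS with the same flow they use on vSphere.

Is there a separate license for the Kubernetes support layer?

What happens to existing TKG clusters during migration?

Does the CSI driver support both block and file workloads?

Yes. The NAS backend serves EXT4 volumes over NFS for ReadWriteMany access, and the block backend hotplugs block drives directly to VergeOS VMs for ReadWriteOnce access.

Where can I read the full announcement?

The full announcement is on the verge.io press release page.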

Next Steps

The full announcement, the architectural argument, the technical overview, the live demonstration, and the verge-io Helm chart repository are one click away.