Kubernetes Without the VMware Tax

VMware shops running Kubernetes pay three taxes at once. VergeOS collapses all three without disrupting Rancher. The control plane stays. The substrate changes. Workloads move on your timeline.

VMware shops pay three vendors to do one job.

vSphere hosts the cluster nodes. A Kubernetes distribution manages them. An overlay storage layer gives them persistent volumes. Three contracts, three operational models, three renewals on three different calendars.

The Status Quo

- vSphere licensing for every cluster node, subscription-only after Broadcom restructuring.

- Kubernetes distribution tax on top — Tanzu, OpenShift, Rancher Prime, or engineering hours for upstream.

- Overlay storage like Longhorn, Portworx, or OpenEBS bolted on because vSphere policies do not extend cleanly into Kubernetes.

- Two operational models in one stack — vCenter for infrastructure, declarative GitOps for clusters, with friction at the boundary.

- Renewals compound across all three line items each cycle.

VergeOS Collapses It

- One platform handles compute, storage, and networking. The vSphere line item disappears.

- Rancher stays the management plane. Application teams see no change in how they consume Kubernetes.

- The native CSI driver delegates storage operations directly to the VergeOS API. Provisioned volumes are VergeOS vSAN volumes with inline dedup, multi-tier placement, and integrated snapshots.

- Cloud Controller Manager provisions LoadBalancer services through VergeOS VNet NAT rules. No external load balancer to manage.

- Phased migration friendly. New clusters land on VergeOS. Old clusters stay on vSphere. Rancher manages both.

Three starting points. One platform decision.

Most teams reach this conversation from one of three places. VergeOS plus Rancher fits all three without forcing a single migration narrative.

VMware Exit with Rancher Continuity

Keep your Rancher control plane unchanged. VergeOS replaces vSphere as the substrate. Application teams see no change. Platform teams see the vSphere line item disappear.

Tanzu Replacement with Phased Migration

For TKG environments facing Broadcom roadmap uncertainty and bundled licensing pressure. Migration is a project, not a port. VergeOS plus Rancher absorbs the work.

Operational Uplift for Bare-Metal Clusters

Teams running Kubernetes directly on hardware gain live migration, integrated DR, and a shared snapshot model without changing their Kubernetes operations workflow.

Same Rancher. Same applications. New substrate.

The Kubernetes control plane and the application layer stay constant. What changes is the substrate underneath — and the overlay storage that no longer needs to exist.

× Before · VMware Stack

✓ After · VergeOS Stack

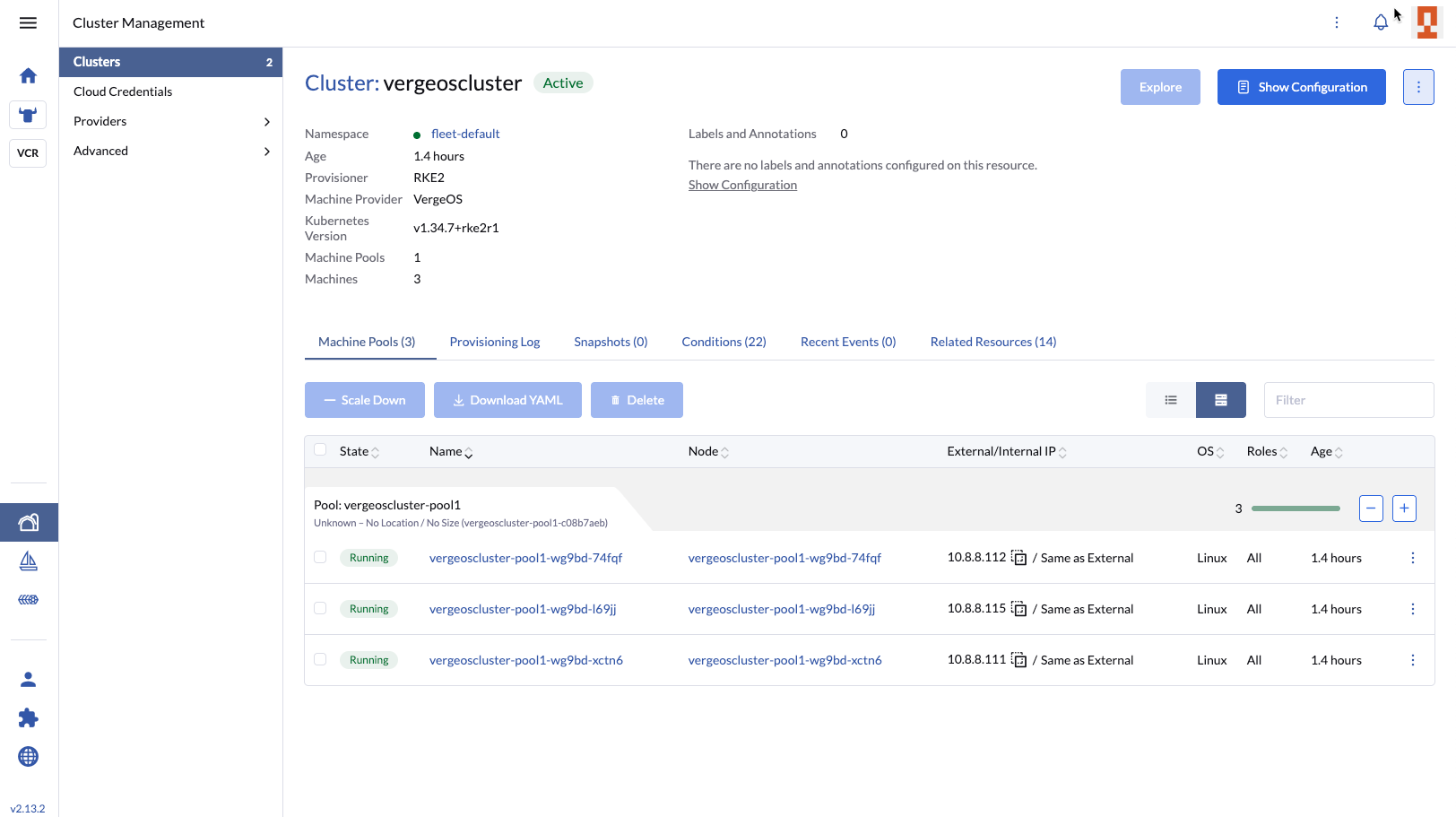

Watch Rancher provision a cluster on VergeOS in real time.

David Zarzycki, the principal engineer who built the Rancher integration, runs a live demonstration of end-to-end cluster provisioning. He walks through node driver behavior, CSI volume operations, Cloud Controller Manager LoadBalancer provisioning, and the Cluster Autoscaler responding to real workload demand. George Crump frames the three-tax argument. Aaron Richman hosts.

What you’ll see:

- Rancher node driver provisioning a fresh RKE2 cluster on VergeOS

- CSI driver creating a persistent volume backed by vSAN

- Cloud Controller Manager allocating a LoadBalancer service through VergeOS VNet

- Cluster Autoscaler scaling a node pool based on pod resource requests

- Live Q&A with audience-driven roadmap polling
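For readers who want intuition for the autoscaling step before the demo, here is a rough sketch of how pending pod resource requests translate into a node count. It is a simplification, not the Cluster Autoscaler's actual algorithm: the real autoscaler simulates scheduling against node group templates, while this sketch only packs CPU and memory requests with a first-fit-decreasing heuristic. The `Request` type, the `nodes_needed` function, and the node sizes are hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    """Resource requests of one pending pod (illustrative type)."""
    cpu_millicores: int
    memory_mib: int


def nodes_needed(pending, node_cpu_millicores, node_memory_mib):
    """Estimate how many new nodes the pending pods would need.

    First-fit decreasing: place the largest pods first, reusing any new
    node that still has room before opening another one.
    """
    free = []  # remaining [cpu, memory] on each node opened so far
    ordered = sorted(
        pending,
        key=lambda p: (p.cpu_millicores, p.memory_mib),
        reverse=True,
    )
    for pod in ordered:
        if pod.cpu_millicores > node_cpu_millicores or pod.memory_mib > node_memory_mib:
            raise ValueError("pod does not fit on an empty node of this size")
        for slot in free:
            if slot[0] >= pod.cpu_millicores and slot[1] >= pod.memory_mib:
                slot[0] -= pod.cpu_millicores
                slot[1] -= pod.memory_mib
                break
        else:
            # No existing node had room, so open a new one.
            free.append([
                node_cpu_millicores - pod.cpu_millicores,
                node_memory_mib - pod.memory_mib,
            ])
    return len(free)


if __name__ == "__main__":
    pending = [Request(1500, 2048), Request(1500, 2048), Request(500, 512)]
    print(nodes_needed(pending, node_cpu_millicores=2000, node_memory_mib=4096))
```

The point of the sketch is that scale-up is driven by what pods request, not by what they currently use, which is why accurate resource requests matter before relying on autoscaling.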

Live Demonstration

Four steps from Helm install to running clusters.

No proprietary tooling. No new operational model. Standard Kubernetes contracts the whole way through.

Install the Helm Charts

Pull the charts for the CSI driver, Cloud Controller Manager, Cluster Autoscaler, and Rancher node driver from the verge-io GitHub repository. Install them with Helm into your existing Rancher instance.

Configure VergeOS Credentials

Add VergeOS as a cloud credential in Rancher. The UI extension surfaces VergeOS-specific configuration forms — the same flow Rancher uses for vSphere.

Provision Clusters

Use Rancher’s standard cluster creation flow. The node driver clones VergeOS template VMs and registers them as cluster nodes. You configure CPU, RAM, and network through the same UI you use for vSphere clusters today.

Migrate Workloads on Your Timeline

Stateless services first. Stateful workloads after validation. Rancher manages clusters on both vSphere and VergeOS for the duration of the migration. Retire vSphere when ready.

Already running stateful workloads on real production traffic.

The native Rancher and Kubernetes integration was developed with a European sports gaming platform serving as the design partner. The customer’s engineering team validated the CSI Driver, Cloud Controller Manager, and Rancher Node Driver against real production workloads during the MVP phase. The integration is approved for use in their production environment.

What that proves: the integration is real work, not a marketing slide. The same components that ship to GitHub today carry stateful traffic in a sports gaming environment that does not tolerate downtime.

| Component | Status |
|---|---|
| CSI Driver | Validated |
| Cloud Controller Manager | Validated |
| Cluster Autoscaler | GA on GitHub |
| Rancher Node Driver + UI Extension | Validated |
| K8s Distributions | RKE2 · K3s · Upstream |
| Production Deployment | Approved |
| License Model | Per Physical Server |

The five questions to answer before you commit.

Use this framework to evaluate VergeOS against your existing VMware-plus-Kubernetes stack — or against any other platform you are considering.

Does the platform run my existing Kubernetes distribution unchanged?

VergeOS does not introduce a Kubernetes distribution. RKE2, K3s, upstream Kubernetes, and vendor distributions all run on VergeOS through Rancher or directly on VergeOS VMs. The CSI driver and Cloud Controller Manager work with any cluster that meets the upstream Kubernetes specification.

If a platform requires you to adopt a specific distribution, you are trading one form of lock-in for another.

How does storage work for stateful workloads?

The VergeOS CSI driver delegates storage operations directly to the VergeOS API. Persistent volumes are vSAN volumes — with inline deduplication, multi-tier placement across NVMe / SSD / HDD, and snapshots that integrate with VergeOS system snapshots. A Kubernetes persistent volume snapshot is a vSAN snapshot, which means stateful workloads share the same DR and replication infrastructure that protects production VMs.

Compare that with overlay storage solutions like Longhorn or Portworx, which run their own replicated storage engines on top of the hypervisor, doubling the work, breaking dedup, and splintering management.
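From the application side, the storage answer above comes down to two standard Kubernetes objects: a StorageClass that names the CSI provisioner, and a PersistentVolumeClaim that requests a volume from it. The sketch below builds both as plain manifests. The provisioner string `csi.example.vergeos`, the class name, and the claim name are placeholders, not the driver's real identifiers; take the actual values from the driver's own documentation.

```python
import json

# Hypothetical provisioner name, used only for illustration.
ASSUMED_PROVISIONER = "csi.example.vergeos"


def storage_class(name, provisioner=ASSUMED_PROVISIONER):
    """Build a StorageClass manifest that routes provisioning to a CSI driver."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": provisioner,
        "reclaimPolicy": "Delete",
    }


def volume_claim(name, storage_class_name, size):
    """Build a PersistentVolumeClaim that requests a volume from the class."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class_name,
            "resources": {"requests": {"storage": size}},
        },
    }


if __name__ == "__main__":
    manifests = [
        storage_class("vergeos-default"),
        volume_claim("orders-db-data", "vergeos-default", "50Gi"),
    ]
    # kubectl accepts JSON as well as YAML, so each document can be applied as-is.
    for manifest in manifests:
        print(json.dumps(manifest, indent=2))
```

Nothing in the claim refers to the platform underneath. Only the StorageClass does, which is why a substrate change can stay invisible to application manifests.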

Can I run Kubernetes and traditional VMs on the same infrastructure?

Yes. Most enterprises run a mix of containerized applications and traditional VMs — databases that have not been containerized, packaged software supported only on VMs, legacy applications that predate the cloud-native era. VergeOS runs all of these workloads alongside Kubernetes clusters on shared infrastructure with shared storage and shared networking.

Kubernetes-only platforms force you to maintain separate infrastructure for these workloads. That is not a trade most enterprises want to make.

What does the migration actually look like?

Industry research describes the dominant Kubernetes migration pattern as parallel operation with iterative workload movement. VergeOS plus Rancher fits this pattern natively. New clusters land on VergeOS. Old clusters stay on vSphere. Rancher manages both for the duration of the migration. Workloads move on your timeline, starting with stateless services and finishing with stateful workloads after the new platform validates under real load.

For Tanzu customers, the migration includes reconstruction of StorageClasses (replacing SPBM-projected policies), networking plane replacement (replacing NSX-T), and developer experience continuity (typically Backstage, Argo CD, Tekton, and Crossplane in place of TAP). VergeOS is the platform built to absorb the work.
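The stateless-first ordering described above can be sketched as a small planning helper. This is an illustrative heuristic rather than a tool that ships with VergeOS or Rancher: it treats a workload as stateful if it is a StatefulSet or mounts at least one persistent volume claim, and the `Workload` type and `plan_waves` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Minimal description of a workload for migration planning (illustrative)."""
    name: str
    kind: str               # for example "Deployment" or "StatefulSet"
    persistent_claims: int  # number of PVCs the workload mounts


def is_stateful(workload):
    """Treat StatefulSets and anything mounting a PVC as stateful."""
    return workload.kind == "StatefulSet" or workload.persistent_claims > 0


def plan_waves(workloads):
    """Split workloads into two waves: stateless first, stateful second."""
    stateless = sorted(w.name for w in workloads if not is_stateful(w))
    stateful = sorted(w.name for w in workloads if is_stateful(w))
    return [
        ("wave 1: stateless", stateless),
        ("wave 2: stateful, after validation", stateful),
    ]


if __name__ == "__main__":
    inventory = [
        Workload("web-frontend", "Deployment", 0),
        Workload("orders-db", "StatefulSet", 1),
        Workload("report-cache", "Deployment", 1),
    ]
    for label, names in plan_waves(inventory):
        print(label, names)
```

A real inventory would come from the clusters Rancher already manages. The split matters because the second wave should only start once the new platform has been validated under real load.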

What changes for my application teams?

Nothing. Application teams continue to use Rancher, kubectl, and standard Kubernetes APIs. The substrate underneath changes. The contract above does not. Pods schedule the same way. Services expose the same way. Persistent volumes mount the same way. The integration is native at the platform layer, not visible to the application layer.

This is the entire point of the Rancher continuity argument. VergeOS slots in as a new substrate alongside vSphere, and after cutover becomes the substrate. Application teams see no change in their day-to-day interaction with Kubernetes.

Resources for the technical evaluation.

Kubernetes Without the VMware Tax

David Zarzycki demos end-to-end Rancher provisioning on VergeOS, plus migration patterns and live Q&A. May 20 at 1:00 PM ET.

verge-io on GitHub

CSI driver, Cloud Controller Manager, Cluster Autoscaler, and Rancher node driver. All four components, all Helm charts, all generally available.

Schedule a Demo

See Rancher provisioning a cluster on VergeOS against your specific environment. Bring your migration questions — we will answer them honestly.

Common questions, direct answers.

Is this real Kubernetes integration or a wrapper?

Native, not bolted on. VergeOS ships a CSI driver and a Cloud Controller Manager as Helm charts from the verge-io GitHub repository. The CCM provisions LoadBalancer services through VergeOS VNet NAT rules. The CSI driver delegates storage operations directly to the VergeOS API — provisioned volumes participate in the full vSAN feature set including inline dedup, multi-tier placement, and integrated snapshots.
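The LoadBalancer contract mentioned above is the standard Kubernetes one: the application declares a Service of type `LoadBalancer`, and whichever Cloud Controller Manager is installed fulfils it and writes the assigned address back into the Service status. The sketch below builds such a Service and reads the address from a status block. The service name, label, and ports are illustrative, and nothing here is specific to the VergeOS CCM.

```python
import json


def load_balancer_service(name, app_label, port, target_port):
    """Build a Service manifest that asks the cloud provider for a load balancer."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "LoadBalancer",
            "selector": {"app": app_label},
            "ports": [
                {"protocol": "TCP", "port": port, "targetPort": target_port},
            ],
        },
    }


def external_addresses(service):
    """Return the addresses a cloud controller has written into the status."""
    ingress = service.get("status", {}).get("loadBalancer", {}).get("ingress") or []
    return [entry.get("ip") or entry.get("hostname") for entry in ingress]


if __name__ == "__main__":
    service = load_balancer_service("web", "web", 80, 8080)
    print(json.dumps(service, indent=2))
    # Until a controller assigns an address, the list is empty.
    print(external_addresses(service))
```

Because the manifest never names a load balancer product, the same Service definition works unchanged on any substrate whose CCM implements the contract.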

Are you replacing Rancher?

No. Rancher remains the management plane. VergeOS replaces vSphere as the substrate underneath. The native Rancher node driver and UI extension provision clusters on VergeOS exactly the way Rancher provisions clusters on vSphere today — same flow, same forms, same operational model.

What Kubernetes distributions do you support?

RKE2 and K3s through the Rancher node driver. Upstream Kubernetes and other distributions through the standard CSI and CCM contracts. The integration is distribution-agnostic at the storage and networking layer — if your cluster meets the upstream Kubernetes specification, the components work.

Why not just use Longhorn or Portworx for storage?

Unified storage policy across VMs and pods. Stateful Kubernetes workloads share the same vSAN that protects production VMs — same snapshot semantics, same DR model, same deduplication, same UI. Overlay storage layers run their own replicated storage engine on top of the hypervisor, which doubles work, breaks dedup, and splinters management.

How do you handle migration from Tanzu (TKG)?

Phased, with Rancher managing both substrates during cutover. New clusters land on VergeOS. Existing TKG clusters stay on vSphere. Stateless services move first. Stateful workloads move after validation. The honest position: migration is a project, not a port. SPBM-projected StorageClasses and NSX-T networking need reconstruction. VergeOS is the platform built to absorb that work.

What about TAP and the developer experience layer?

Tanzu Application Platform v1.12 was designated long-term support in March 2026, so TAP technically remains supported. The investment thesis behind it, however, has been undermined: Tanzu Platform SaaS reached End of Availability May 1, 2025, legacy Tanzu SKUs were retired in May 2024, and Broadcom has made substantial workforce cuts across the Tanzu organization. Most TAP shops migrate to a CNCF-native developer experience built on Backstage, Argo CD, Tekton, and Crossplane.

Can I run this on bare metal?

VergeOS itself runs on bare-metal commodity hardware. Kubernetes clusters then run on VergeOS VMs, which is the enterprise pattern. This gives you live migration, integrated DR, snapshot-based recovery, and unified storage policy across containerized and traditional workloads. If you are running Kubernetes directly on bare metal today, this is the operational uplift path.

Ready to collapse the stack?

See Rancher provisioning a cluster on VergeOS the same way it does on vSphere today — live, on May 20. Or schedule a demo against your specific environment.